Many different kinds of software are available today, so speed is crucial for the life-cycle management of an application. The majority of the most well-known software companies distribute updates for their software, making software testing and release management a daily activity.

All these companies need the best management possible; otherwise, their productivity will decrease. They therefore need to develop long-term plans and strategies, involving stakeholder communication and resource allocation, to maintain their software.

Companies can accomplish that consistency by using Application Lifecycle Management (ALM). ALM tools assist companies in making better choices for their software engineering projects, thus enabling them to effectively sustain the software and manage its software architecture.

Application Lifecycle Management (ALM) is similar to Product Lifecycle Management (PLM), the difference being that ALM applies to software applications. ALM incorporates all the key components of an application’s life-cycle, ranging from gathering the initial requirements to the application’s maintenance. The life-cycle ends when the application stops being used, marking the phase for discontinuing the application.

This article will examine some of these aspects of application lifecycle management, as well as the advantages of using ALM, risk management, and the necessary tools.

What Exactly Is ALM?

Simply put, ALM is the process of creating and maintaining an application until it’s no longer used. So, anything from the initial idea to customer support is part of the application lifecycle management. ALM involves every member of your team, along with any tools they use, which could include a mix of Agile Methodology and DevOps development approaches. Any actions taken by the team to manage the application are also part of ALM.

In theory, application lifecycle management enables improved communication during the lifetime of the application, which allows every department to collaborate with the development teams easily and efficiently.

By using application lifecycle management, you can implement both Agile and DevOps development approaches, because your different departments can cooperate more efficiently.

Some people confuse application lifecycle management (ALM) with the software development life cycle (SDLC). While they may appear similar, the essential difference is that ALM consists of every stage of an application’s lifetime. SDLC includes only the development side of an application, so SDLC is just one part of ALM.

What Does Application Lifecycle Management Consist Of?

Application lifecycle management covers many different departments, including:

- Project Management

- Application Development

- Requirements Management

- Testing and QA

- Customer Support

Application lifecycle management can be divided into different phases, or it can be a constant procedure, depending on your development process (e.g., waterfall, agile, or DevOps).

No matter which process you decide upon, ALM is divided into four distinct parts:

Administration, Development, Operations, and Maintenance.

Application Administration (Defining Requirements and Designing)

The Application Administration is an essential phase of the ALM. It is also known as the “requirements definition and design” phase. If you decide to use DevOps, this is the stage where you plan and create.

Generally, you collect all requirements for the application in this stage, ranging from the client’s requirements to compliance requirements from governing bodies. Requirements gathering usually proceeds top-down: general requirements are defined first, followed by more specific ones.

Application Development

The second stage of the application lifecycle management is development, also known as the Software Development Life Cycle (SDLC), a key component of ALM.

This stage of application development consists of the following:

- Planning: Establishing project management strategies and long-term plans

- Designing: Involving software architecture and user stories

- Creating: Actual coding of the application

- Testing and QA: Quality Assurance procedures to validate the application

- Publishing: The release management and deployment of the app

- Maintaining: Continuous sustainment of the application based on feedback and performance metrics

Development begins once you have defined the requirements through requirements management. At this stage, your application is brought to life using Agile or DevOps approaches, depending on your choice.

The development team needs to break the requirements down into smaller ones. This is how the team creates the development plan it will follow throughout the software engineering process. It’s recommended to include representatives from each team during this process to ensure that development goes smoothly. These steps might differ depending on the development approach you choose, such as Waterfall or Agile. The tasks of the development team include:

- Designing the application, based on the needs of the users

- Defining the software architecture

- Coding the application

- Managing changes: Change management

- Conducting version control

- Managing configurations: Configuration management

- Testing and QA

- Managing different builds

- Managing the official release and sustaining the final application
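The decomposition step described above can be pictured as a small tree of requirements. The following is a minimal Python sketch, not a feature of any particular ALM tool: the names `Requirement` and `leaf_tasks` and the sample requirement texts are all hypothetical. It only illustrates how one general requirement might be broken into smaller items that together form a development plan.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    """A requirement that may be split into smaller sub-requirements."""

    title: str
    children: list[Requirement] = field(default_factory=list)

    def add(self, title: str) -> Requirement:
        """Attach a smaller requirement under this one and return it."""
        child = Requirement(title)
        self.children.append(child)
        return child


def leaf_tasks(req: Requirement) -> list[str]:
    """Return the titles of the undivided requirements, depth-first."""
    if not req.children:
        return [req.title]
    tasks: list[str] = []
    for child in req.children:
        tasks.extend(leaf_tasks(child))
    return tasks


# Hypothetical example data, invented for illustration only.
root = Requirement("Users can manage their account")
login = root.add("Users can log in")
login.add("Validate credentials")
login.add("Lock account after repeated failures")
root.add("Users can reset their password")

# The leaves are the concrete items the team would plan and track.
plan = leaf_tasks(root)
```

In this sketch the general requirement stays in the tree as context, while only the leaves end up in the plan. A real tool would also attach owners, estimates, and status to each item.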

Application Operations

This stage is concerned with maintaining the final application. In DevOps, this stage includes the release, configuration management, and monitoring of the application. This phase investigates potential bugs and their fixes. Working through these fixes also determines how release updates for your application will be managed as part of application lifecycle management.

Application operations cover all processes involved in maintaining the application and monitoring its KPIs, including performance measurement. Operations start once the application has been published and end only when the application reaches the end of its lifecycle, a decision that involves business analysis and ROI considerations.

The tasks of the application operations team include:

- Customer Support

- Reporting

- Performance Monitoring

- Security Monitoring: Part of Risk Management

Consistent Maintenance And Improvement Of The Application

As a general rule, the longest part of the application lifecycle management is its maintenance. Neither the development teams nor the users are generally involved during this continuous procedure.

During this whole process, the application is supported by a support team, who will solve any problems that occur. Any maintenance and improvement is done after the application is deployed, so the performance of the application can be systematically reviewed.

Any bugs that remain will be discovered and fixed during this stage. If the development team has done a great job, their help is not required. This aligns with quality assurance practices.

A key part of this process is deciding when the application and project should be retired.

Once that decision is made, the team can work on newer versions of the application, or the product can be changed into something completely different following a business case evaluation.

Why Is ALM So Important?

If you want to deploy quality releases on time, implementing Application Lifecycle Management (ALM) is essential for achieving software quality and timeliness.

It allows you to set the correct requirements.

Using requirements management, you can ensure that these goals are met. This not only aids the development team but also improves the overall project management of the application. Remember to always perform Testing and QA as the process unfolds.

The best way to implement ALM is by using dedicated ALM tools. There are many excellent tools on the market compatible with various development approaches such as Agile or DevOps.

Combining ALM tools with a skillful development team will make your product stand out and deliver exceptional ROI.

You can use ALM with any development approach.

ALM serves as the foundation and its features depend on your chosen development approach. Whether you use traditional methods like Waterfall or more modern frameworks like Agile, ALM is fundamentally about creating, integrating, and sustaining a software product through continuous procedures.

ALM adopts a holistic approach.

Every process in ALM, from design to maintenance, can be run individually, but the processes depend on one another to produce the best possible product.

This is true not only for ALM-based applications but also when integrating non-ALM systems for the efficient functioning of your business.

ALM encourages cross-team communication and collaboration, enhancing the software engineering process across different departments.

The Benefits Of Application Lifecycle Management

ALM provides multiple advantages, and some of the most significant benefits include:

Improves Employee Satisfaction, Productivity, and Usage

It significantly aids the development team and impacts the Software Development Life Cycle (SDLC) positively.

By leveraging ALM, teams can choose tools they prefer, thus boosting employee satisfaction and productivity.

Allows for Real-Time Decision-Making

ALM supports real-time planning and version control, giving development teams a competitive edge. This enables them to “predict” the application’s future through business analysis, facilitating early planning and continuous improvement of the application.

Collaborating with Multiple Teams

For global organizations, ALM becomes a necessity as it enables seamless communication without network disturbances. ALM tools keep everyone involved in the development phase updated about the status and changes in project strategy, making it crucial for risk management.

Boosts Development Speed and Agility

In today’s highly competitive market, speed and agility are paramount. ALM enables rapid software development, allowing businesses to remain competitive.

Enables Tests and Solutions

ALM tools offer a platform that supports both development and testing phases of your application. When the testing team finds bugs, they can immediately inform the development team to start crafting a solution, enhancing the quality assurance practices.

Assists Businesses in Planning More Effectively

With ALM, projects are initiated with proper methodologies and resource planning. Depending on your development approach, you’ll have access to tools tailored for either a Waterfall or Agile project.

Acquiring and Sustaining Highly Satisfied Customers

Customer satisfaction is vital for any business. ALM excels in this area by offering an integrated system for collecting customer feedback, which is then communicated to the relevant teams for product improvement.

Agile vs. Waterfall Approaches in Software Development

Agile software development is rapidly increasing in popularity, transforming software engineering practices. This modern approach has changed how teams handle application lifecycle management (ALM), offering a different take on continuous procedures and project management.

In many organizations, the Agile methodology has largely displaced the more traditional Waterfall methodology, and it has reshaped all stages of ALM, from requirements management and planning to maintenance.

What Is The Waterfall Methodology?

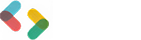

The Waterfall methodology is more traditional and is also known as the Linear Sequential Life Cycle Model. This approach aligns more with sequential order and linear progression, which is integral to its project management strategy.

In a Waterfall project, the team will progress to the next stage only when the current step is completed.

Each stage is done only once, emphasizing risk management and quality assurance. The journey begins with requirements identification, followed by the development phase, deployment, and ultimately, maintenance.
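The "finish one stage before starting the next" rule can be sketched as a tiny state machine. This is an illustrative Python sketch only: the class `WaterfallProject`, its method names, and the short stage labels are assumptions made for the example, with the stage order taken from the sequence described above.

```python
# Stage order as described in the text: requirements, development,
# deployment, maintenance. The short labels are illustrative.
WATERFALL_STAGES = ["requirements", "development", "deployment", "maintenance"]


class WaterfallProject:
    """Tracks a project that may only move forward, one completed stage at a time."""

    def __init__(self) -> None:
        self._index = 0
        self._completed = False

    @property
    def current_stage(self) -> str:
        return WATERFALL_STAGES[self._index]

    def complete_current_stage(self) -> None:
        """Mark the current stage as finished (for example, after sign-off)."""
        self._completed = True

    def advance(self) -> str:
        """Move to the next stage; refuse if the current one is unfinished."""
        if not self._completed:
            raise RuntimeError(f"stage '{self.current_stage}' is not complete")
        if self._index == len(WATERFALL_STAGES) - 1:
            raise RuntimeError("already at the final stage")
        self._index += 1
        self._completed = False
        return self.current_stage
```

There is deliberately no method for going back to an earlier stage, which mirrors the idea that each Waterfall stage is done only once.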

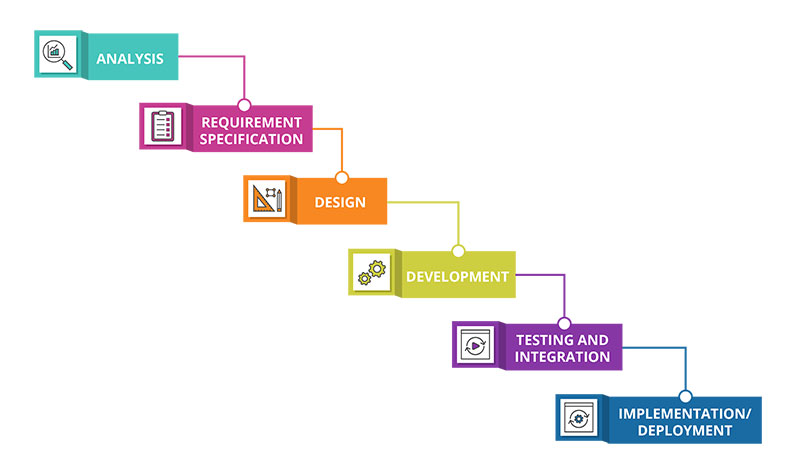

What Is The Agile Methodology?

The Agile methodology is significantly different from Waterfall. Development and testing are conducted simultaneously, a practice that fits real-time planning and application lifecycle management.

This is beneficial as it fosters improved cross-team communication between developers, customers, and managers.

The Agile approach encourages teams to continually validate their work, meaning that every step from design to testing is revisited multiple times during the development phase for continuous improvement.
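The repeated pass through design, development, and testing can be sketched as a loop over small batches of work. This is a simplified Python illustration under stated assumptions: the function `run_iterations`, the `capacity` parameter, and the step names are hypothetical, and the steps are listed one after another here even though in practice development and testing overlap within an iteration.

```python
# Steps revisited in every iteration; the names are illustrative.
ITERATION_STEPS = ("design", "develop", "test")


def run_iterations(backlog: list, capacity: int) -> list:
    """Split the backlog into iterations of at most `capacity` items.

    Returns a log of (iteration number, step, items) tuples showing that
    every iteration goes through all steps again.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    log = []
    for start in range(0, len(backlog), capacity):
        batch = tuple(backlog[start:start + capacity])
        iteration = start // capacity + 1
        for step in ITERATION_STEPS:
            log.append((iteration, step, batch))
    return log


# Hypothetical backlog items, invented for the example.
schedule = run_iterations(["login", "search", "checkout"], capacity=2)
```

Each batch of backlog items gets its own design, development, and test pass, which is the main contrast with a single pass over the whole scope.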

Application Lifecycle Management (ALM) Tools

ALM tools are employed to automate and optimize the development and deployment process of software applications. These tools are essential for achieving business analysis and software quality goals.

Key features of ALM tools include:

- Requirements management

- Estimation and planning

- Source code management

- Testing and Quality Assurance (QA)

- Deployment or DevOps

- Oversight of multiple application lifecycle management phases

- Traceability between different phases

- Record of entities’ history (i.e., change management—who altered what and when)

- Maintenance and support

- Version control

- Application portfolio management

- Real-time planning and team communication
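Two of the features listed above, traceability between phases and a record of each entity’s history, can be illustrated with a small in-memory sketch. This Python example is an assumption-laden illustration and does not reflect how any listed ALM product stores its data: `ChangeRecord`, `TraceabilityLog`, and identifiers such as `REQ-1` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeRecord:
    """Who altered what, and when."""

    entity_id: str
    author: str
    description: str
    timestamp: datetime


class TraceabilityLog:
    """Links requirements to later artifacts and keeps a change history."""

    def __init__(self) -> None:
        self._links: dict[str, set[str]] = {}
        self._history: list[ChangeRecord] = []

    def link(self, requirement_id: str, artifact_id: str) -> None:
        """Record that an artifact (test, commit, release) traces to a requirement."""
        self._links.setdefault(requirement_id, set()).add(artifact_id)

    def record_change(self, entity_id: str, author: str, description: str) -> ChangeRecord:
        """Append an entry to the history with the current UTC time."""
        record = ChangeRecord(entity_id, author, description, datetime.now(timezone.utc))
        self._history.append(record)
        return record

    def artifacts_for(self, requirement_id: str) -> list[str]:
        """Return linked artifact ids in sorted order."""
        return sorted(self._links.get(requirement_id, set()))

    def history_of(self, entity_id: str) -> list[ChangeRecord]:
        """Return all recorded changes for one entity, oldest first."""
        return [c for c in self._history if c.entity_id == entity_id]


# Hypothetical usage with invented identifiers.
log = TraceabilityLog()
log.link("REQ-1", "TEST-7")
log.link("REQ-1", "COMMIT-abc")
log.record_change("REQ-1", "alice", "Tightened acceptance criteria")
```

With links like these, a team can ask which tests and commits cover a requirement, and the history answers who changed an item and when.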

Every ALM tool also functions as a project management tool, facilitating high-quality communication between teams, a must-have for efficient functioning and ROI.

Depending on your development process—whether it’s Waterfall, DevOps, or Agile—your chosen ALM tool should be compatible and assist in your software engineering practices.

Some of the most well-known ALM tools are the following:

- Atlassian Jira

- IBM ALM solutions

- Rally (formerly known as CA Agile Central)

- CollabNet VersionOne

- ALM Octane

- Rommana ALM

- Jama Software

- SpiraTeam

- Perforce Helix ALM

- Microsoft Azure DevOps Server

- Tuleap

- TeamForge by CollabNet

- Basecamp

FAQs about Application Lifecycle Management (ALM) and Software Engineering Practices

1. What is Application Lifecycle Management (ALM)?

Application Lifecycle Management (ALM) is a structured collection of procedures and tools used to manage an application’s whole lifecycle, from creation to retirement. ALM is crucial for efficient functioning and ROI in software development.

ALM involves organizing and monitoring the activities of software developers, testers, project managers, and other stakeholders, which facilitates cross-team communication.

2. What are the stages of the ALM process?

The requirements management, design, development, testing and quality assurance (QA), deployment, and maintenance phases make up the ALM process.

Each stage comprises a separate set of tasks, resources, and individuals to ensure the software is developed, tested, and deployed in a systematic and controlled manner.

3. What are the benefits of implementing ALM in software development?

ALM can enhance software quality, reduce development cycles and costs, and improve stakeholder engagement and communication.

Moreover, it ensures regulatory and industry compliance, offering a framework for risk management and problem-solving throughout the development process.

4. What is the role of ALM in DevOps?

ALM plays a significant role in DevOps by offering a unified platform for managing the entire software engineering practice, including development and deployment. ALM helps to automate and streamline the process, ensuring quality, security, and compliance.

5. What is the difference between ALM and Software Development Life Cycle (SDLC)?

ALM covers an application’s entire lifecycle, including planning, design, development, testing, deployment, and maintenance.

SDLC refers specifically to the development phase, which itself encompasses requirements gathering, design, coding, testing, and deployment. It is therefore one part of ALM rather than a synonym for it.

6. How does ALM help in managing risks in software development?

ALM offers a risk management framework to detect, evaluate, and manage risks throughout the software development process.

This framework allows organizations to proactively mitigate potential risks and ensure the software is developed and deployed in a regulated and secure manner.

7. What are the best practices for implementing ALM in an organization?

The best practices involve selecting the appropriate ALM tools and technologies, defining a precise and consistent process, including all stakeholders, developing metrics for success, and routinely assessing and refining the process for continuous improvement.

8. How does ALM integrate with agile software development methodologies?

ALM can be integrated with Agile methodologies by incorporating agile practices and principles at each level of the ALM process. Agile ALM employs iterative cycles, frequent feedback, and communication between stakeholders to ensure the product meets user needs and expectations.

9. What tools are available for ALM?

Various ALM tools, from testing and deployment automation tools to integrated development environments (IDEs) and project management software, are available.

Microsoft Visual Studio, Atlassian Jira, and IBM Rational are some of the well-regarded ALM technologies.

10. How does ALM facilitate collaboration among developers, testers, and other stakeholders?

ALM simplifies cross-team collaboration by providing a single forum for communication, documentation, and feedback among developers, testers, and other stakeholders.

By involving everyone in the ALM process, organizations ensure that project objectives and goals are met, and that risks and issues are addressed in a timely and efficient manner.
