The world is steadily progressing further and further into the digital age. Many people use the digital tools now available to advance their businesses.

Digital advertising is a large business because many companies want to reach a global audience. As it grows, so does the need to make sure that adverts are effective, appear where they were meant to appear, and generate high-quality impressions and engagement.

No one wants to waste money on bad advertising, or what some in the industry refer to as traffic quality issues, which include invalid traffic (IVT) and poor ad performance.

However, the more opportunities there are for digital advertising, the more opportunities there are for ad fraud. Thus, the need for ad verification arose to ensure brand safety and to combat fraud and malvertising.

Both marketers and publishers want ad campaigns that are effective, are designed well enough to reach and positively affect the target audience, and do not send the wrong message or create unwanted content associations. This is where ad verification, including viewability measurement and third-party verification services, comes in.

But what is it and why should you care about it?

What is Ad Verification?

In the early days of digital advertising campaigns, things used to be quite simple. Websites were cleaner and there were fewer advertisement formats.

In general, when a business placed an ad online, it was almost certain to get views and a reasonable CTR (click-through rate), and the quality of the traffic was rarely in question.

Nowadays, there are millions of websites and many ad formats, including display ads, video ads, and programmatically traded ads. On top of that, fraudsters create havoc for advertisers and campaigns, which hurts advertising ROI and makes the integrity of real-time bidding (RTB) more important.

This means that even when advertisers spend money on a digital ad, the intended viewers don't always see it, for example because of inaccurate geo-targeting or problems in the programmatic supply chain. To overcome these challenges, businesses turned to ad verification technology, which checks their advertisements across platforms for discrepancies that hinder optimal ad delivery.

The ad verification process checks the quality and visibility of ad impressions in real time, using ad performance metrics.

It makes sure that an advertisement appears on the right website, at the right time, and in the right spot. It ensures that the advert is seen by real people, not bots, in the correct context and by the target audience. This supports compliance and transparency, including in environments where cookie-based tracking is no longer available.

Why are Ad Verification Solutions Important?

Ad verification solutions are important because they protect marketers and their ad spend through campaign monitoring and traffic quality checks. They also protect publishers and their advertising standards by maintaining media quality and advertising transparency.

Ad verification protects in eleven main ways:

- It protects the brand image of a business, ensuring brand safety.

- It boosts ad campaign performance by improving engagement metrics and click-through rates (CTR).

- It makes sure that advertising dollars are well spent by avoiding invalid traffic (IVT).

- It makes sure the brand is safe from unwanted content associations, thus upholding contextual advertising principles.

- It ensures a quality impression, which includes viewability measurement and real people engagement.

- It protects from potential consumer privacy conflicts, staying ahead of privacy compliance.

- It protects ads from websites with bad UIs, ensuring a quality ad inventory.

- It prevents fraudsters from interfering with advertisements by implementing click fraud prevention.

- It ensures that a digital ad matches the specified terms for an ad campaign, promoting ad placement verification.

- It surveys ad exchanges and ad networks to uncover ad buys that do not meet the standards of the advertiser, contributing to ad exchange integrity.

- It provides advertisement reporting, a critical component of an ad spend audit.

As digital advertising gains traction, so does advertisement fraud. Some fraudsters set up fake websites and simulate a large amount of traffic so that advertisers pay to display ads there, which is why bot traffic detection matters.

Others redirect users to a different location after they click on an advertisement. This reduces the income the business gets from the ad and is the kind of abuse that malvertising prevention is meant to stop.

Other problems include ads being placed near offensive content, which damages the brand image and calls for stringent ad compliance.

An ad may also be placed where it is not viewed or does not capture consumer attention. It’s clear that many problems can arise when it comes to online advertising, including issues with geo-targeting accuracy and ad delivery validation.

The digital advertising industry wants to optimize its ad spending, so its focus is on creating new ad quality standards and ensuring campaign effectiveness.

They are also determined to make digital advertising transparent and accountable, fostering advertising network trust.

Verification companies help marketers make smart decisions with their ad spend. They help advertisers navigate privacy legislation and confront cookieless tracking challenges.

They also build confidence in the digital ecosystem. Ad verification could change how marketers buy media through demand-side platforms (DSPs) and supply-side platforms (SSPs), and how advertisements are valued, considering factors like ad server auditing and header bidding.

How Does Ad Verification Work?

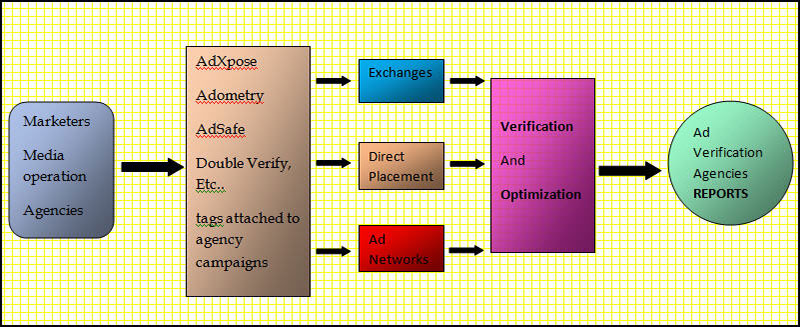

Ad verification works by deploying verification tags or beacons inside the ad markup, a process integral to ad placement verification.

The verification tag analyzes the content of the publisher’s page, the location of the ad, and how many viewers see it, which are key factors in viewability measurement. Then, it sends a report to the advertiser or agency so they can analyze the data and assess traffic quality and ad visibility.

The point of ad verification is to have an impartial third party verify the delivery of the ads, which is the role of third-party verification services. In general, the advertiser has a separate login with the verification vendor, which keeps the verification independent.

In other cases, verification is integrated more seamlessly into a demand-side platform (DSP) as part of the programmatic buying workflow.

Ad verification cannot guarantee that advertisements never show up in undesirable places. But it can fend off hackers, fraudsters, and publishers with little visibility, thereby reducing invalid traffic and malvertising.

It’s also possible to block tracked ads from showing up on certain pages. This happens if a page does not have the right context according to the parameters laid out for an ad campaign.

Those parameters include the geographical location and the surrounding content. Ad verification helps the ad campaign in its early stages by blacklisting pages and publishers known for low visibility or fraud, which supports both fraud detection and geo-targeting accuracy.
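To picture how those parameters might be applied to a single ad call, here is a minimal Python sketch. It is an illustration only: the `CampaignRules` fields, the category labels, and the example domains are all made up for this example, and real verification vendors use their own rule formats.

```python
from dataclasses import dataclass, field


@dataclass
class CampaignRules:
    """Hypothetical campaign parameters; the field names are illustrative."""
    allowed_countries: set
    excluded_categories: set
    blocked_domains: set = field(default_factory=set)


def placement_decision(rules, domain, country, page_categories):
    """Return ("allow" or "block", reason) for one ad call."""
    # 1. Pages and publishers already known for low visibility or fraud.
    if domain.lower() in rules.blocked_domains:
        return "block", "domain on blocklist"
    # 2. Geographical location of the viewer.
    if country.upper() not in rules.allowed_countries:
        return "block", "outside target geography"
    # 3. Surrounding content on the page.
    unsafe = rules.excluded_categories & set(page_categories)
    if unsafe:
        return "block", "excluded content: " + ", ".join(sorted(unsafe))
    return "allow", "matches campaign parameters"


rules = CampaignRules(
    allowed_countries={"US", "CA"},
    excluded_categories={"violence", "hate"},
    blocked_domains={"low-quality.example"},
)
print(placement_decision(rules, "news.example", "US", ["sports"]))
print(placement_decision(rules, "news.example", "US", ["violence"]))
```

The checks run from cheapest to most specific, so a blocked domain is rejected before the page content is even considered.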

Ad verification companies provide regular reports of the data collected from tracked advertisements, which are essential for advertisement reporting and ad performance metrics.

These reports highlight the blocked pages and ad calls. This allows publishers to troubleshoot or adjust ad placement, upholding advertising standards and brand safety.

Who Benefits from Ad Verification?

Verifying ads protects marketers. It helps them spend advertising budgets in the best way possible, which leads to better ad buying decisions and an optimized ad spend.

But it also benefits publishers in various ways, ensuring transparency and accountability, and fostering ad exchange integrity.

How Advertisers Benefit:

- It protects their marketing budget, essentially guarding their ad spend and ROI in advertising

- It ensures that campaigns are as effective as possible at capturing consumer attention, boosting ad campaign performance and click-through rates (CTR)

- It ensures that ads are being displayed according to an agreed-upon contract, ensuring compliance and transparency

- It encourages publishers to be honest and transparent about their website traffic, promoting a culture of accountability and cross-platform ad verification

How Publishers Benefit:

- It gives them better control of the advertisements they run on their website

- It helps minimize the risk of running fraudulent ads on their site, serving as a layer of ad fraud prevention and malvertising prevention

- It protects their users from any unnerving and harmful experiences. Thus, it fortifies the relationship between website owner and viewer, contributing to brand safety and consumer privacy protection

- It provides an automated ad quality enforcer, offering real-time ad impression quality checks and media quality verification

Because of its security measures, ad verification protects advertisers, publishers, and internet users, creating a more transparent and accountable digital ecosystem, ensuring advertising network trust and programmatic advertising transparency.

What Ad Verification Offers to Ad Impressions

Brand Safety and Brand Suitability

It is important to understand the difference between brand safety and brand suitability. How does it affect you?

Brand safety is a strategy to prevent negative brand perception and ad placement discrepancies. It is a proactive strategy that aligns with brand image maintenance and advertisement content integrity.

It seeks to avoid harmful influences and unwanted content associations from neighboring undesirable content, which is crucial for maintaining ad quality standards.

Brand suitability takes a different approach. The brand suitability strategy focuses on understanding the context of ad placements and audience engagement, going beyond mere ad fraud detection.

It involves understanding where ads will appear, how viewers will interact with them, and how they engage the audience, promoting brand image and context, which is vital for targeted ad delivery and contextual relevance.

Ad verification companies have established categories. These categories help advertisers to define content that is undesirable for their brand, ensuring brand safety and suitability, and ad content verification.

This ensures that their ads are not displayed next to such content, supporting ad compliance and brand protection. Some of those categories include:

- Adult content

- Copyright infringement content

- Weapons

- Violence

- Hate

- Profanity

Fraudulent Activity

Fraudsters have taken full advantage of digital tools. Fraudulent advertisements and traffic, which undermine traffic quality, have plagued online advertising since its early days and hurt advertising ROI.

Everyone involved in marketing should eliminate malicious ad content as fast as possible, because it damages both revenue and trust in digital advertising.

Ad verification helps manage the risk of running fraudulent ads, offering a layer of ad fraud prevention and malvertising protection.

It protects publishers from running a fraudulent ad and potentially hurting their viewers. It also protects advertisers from fraudsters who divert consumer attention elsewhere, which lowers CTR (click-through rate) and media buying efficiency.

Fraudulent activity affects both direct and exchange campaigns, influencing ad buying and media value, and may involve impression laundering or geo-targeting fraud.

Publishers are the last link between marketers and clients, so they carry a greater share of the burden of minimizing malicious ads and maintaining advertising standards.

Publishers are also responsible for third-party and demand-side platform (DSP) campaigns, where ad verification technology becomes essential in combating programmatic fraud. Some fraudulent activities include:

Hidden and Invisible Advertisements

Hidden advertisements are invisible on the website, but the ad impression is still reported. The advertiser pays for an ad nobody could have seen, which distorts traffic quality figures and is a direct threat to ad viewability.
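As a rough illustration, the Python sketch below flags a few rendering states in which an ad cannot be seen. The `SlotSnapshot` fields are hypothetical facts a verification tag might report about an ad slot, and the list of checks is an assumption for this example, not a complete or vendor-specific one.

```python
from dataclasses import dataclass


@dataclass
class SlotSnapshot:
    """Hypothetical rendering facts reported for one ad slot."""
    width_px: int
    height_px: int
    opacity: float                  # 0.0 (transparent) to 1.0 (opaque)
    css_display: str                # e.g. "block" or "none"
    covered_by_other_element: bool  # True if something sits on top of the ad


def hidden_ad_reasons(slot):
    """Return the reasons a slot looks hidden; an empty list means none found."""
    reasons = []
    if slot.width_px <= 1 or slot.height_px <= 1:
        reasons.append("rendered at 1x1 pixel or smaller")
    if slot.opacity == 0:
        reasons.append("fully transparent")
    if slot.css_display == "none":
        reasons.append("display set to none")
    if slot.covered_by_other_element:
        reasons.append("stacked under another element")
    return reasons


normal = SlotSnapshot(300, 250, 1.0, "block", False)
pixel_stuffed = SlotSnapshot(1, 1, 1.0, "block", False)
print(hidden_ad_reasons(normal))
print(hidden_ad_reasons(pixel_stuffed))
```

An impression that is reported as served while any of these reasons applies is a candidate for closer review.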

Impression Laundering

This fraudulent activity conceals the real website that is displaying the ad, so the advertiser believes the impression ran on a different, more reputable site. It undermines transparency and compliance and makes verification harder.

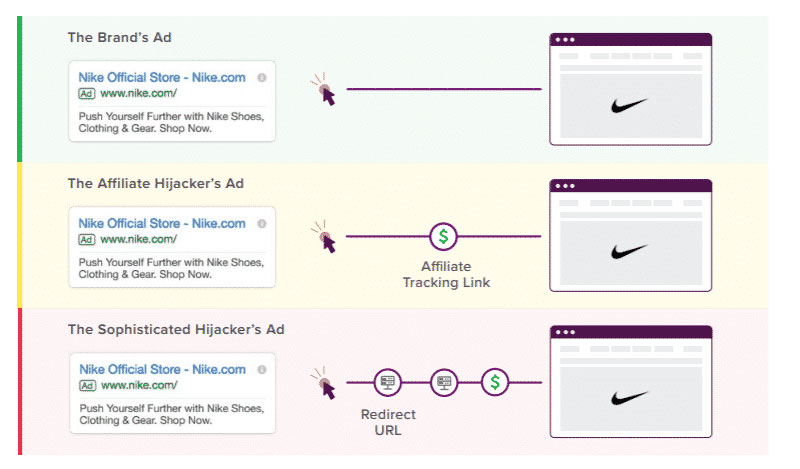

Ad Hijacking

Ad hijacking is also known as advertisement replacement and is closely related to malvertising. Malware hijacks the ad slot on a website, which wastes ad spend, lowers advertising ROI, and reduces the effectiveness of the campaign.

The malware displays its own ad and generates revenue for the attacker instead of the rightful owner of the ad or the owner of the website. This costs the site both revenue and trust.

Click Hijacking

Click hijacking is similar to ad hijacking and is a form of click fraud. An attacker hijacks a user’s clicks and redirects them, which distorts the CTR (click-through rate) and campaign performance figures and makes digital ad tracking unreliable.

Popunders

This attack uses pop-unders, a form of intrusive advertising. These are similar to pop-up windows except that they appear behind the main browser window instead of in front of it.

Because the advertisement sits behind the main window, few people see it or engage with it, even though the impression is counted. That lowers the quality of ad impressions and skews engagement metrics.

Bot Traffic

Bot traffic comes from botnets of compromised computers or from sets of cloud servers and proxies. It generates revenue for fraudsters by faking clicks and gaming attribution models, which complicates ad fraud prevention and skews ad performance metrics.
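One of the simplest signals of faked clicks is a single source clicking far more often than a person plausibly would. The Python sketch below counts clicks per IP address in one time window. The log format, the function name, and the threshold of 20 clicks are assumptions for illustration; real detection combines many more signals than this.

```python
from collections import Counter


def suspicious_ips(click_log, max_clicks_per_ip=20):
    """Return {ip: click_count} for IPs above the threshold.

    click_log is an iterable of (ip_address, ad_id) tuples taken from a
    single time window, for example one hour. The threshold is arbitrary.
    """
    counts = Counter(ip for ip, _ad_id in click_log)
    return {ip: n for ip, n in counts.items() if n > max_clicks_per_ip}


# Example log: one address clicks 50 times, two others click once each.
log = [("192.0.2.10", "ad-1")] * 50
log += [("198.51.100.7", "ad-1"), ("203.0.113.4", "ad-2")]
print(suspicious_ips(log))
```

A flagged address is not proof of fraud, since many real users can share one IP, so a result like this is better treated as a prompt for further checks.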

Fake Users

Fraudsters can use desktops, laptops, and mobile apps to imitate human activity. They build large audiences of fake users, undermining transparency and accountability in digital advertising, and affecting audience targeting accuracy.

Fake Installs

Fraudsters use install farms, a type of performance ad fraud. Instead of imitating human activity with software, they use teams of real people to install and interact with apps. This affects both direct and exchange campaigns and distorts app advertising analytics.

Attribution Manipulation

Malicious pieces of code run programs or perform actions on a device, a form of conversion fraud. They send clicks, installs, and in-app events that never actually happened, compromising ad tracking and ad data integrity.

These fake events are sent to an attribution system and take false credit for user engagement, a concern for transparent reporting and ad verification standards.
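One signal that can hint at this kind of false credit is the time between the claimed click and the install. If the install follows the click implausibly fast, or even precedes it, the click was probably not what drove the install. The Python sketch below shows the idea. The event fields and the 10-second minimum are assumptions chosen for illustration, not an industry rule.

```python
from datetime import datetime, timedelta


def implausible_attributions(events, min_gap=timedelta(seconds=10)):
    """Return install IDs whose click-to-install gap is below min_gap.

    Each event is a dict with hypothetical keys "install_id",
    "click_time", and "install_time" (the last two are datetimes).
    A negative gap (install before click) is flagged as well.
    """
    flagged = []
    for event in events:
        gap = event["install_time"] - event["click_time"]
        if gap < min_gap:
            flagged.append(event["install_id"])
    return flagged


t0 = datetime(2024, 4, 1, 12, 0, 0)
events = [
    {"install_id": "a", "click_time": t0, "install_time": t0 + timedelta(minutes=3)},
    {"install_id": "b", "click_time": t0, "install_time": t0 + timedelta(seconds=2)},
    {"install_id": "c", "click_time": t0, "install_time": t0 - timedelta(seconds=30)},
]
print(implausible_attributions(events))
```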

Viewability

Viewability refers to whether an ad is in a viewable space within the browser according to pre-established criteria, which is key for ad buying and optimizing media value. These criteria may include the percentage of ad pixels visible and the duration the advertisement remains in view.
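To make the pixel and duration criteria concrete, here is a minimal Python sketch. It assumes a verification tag samples how much of the ad overlaps the browser viewport over time. The `Rect` type, the function names, and the sample format are made up for illustration. The default thresholds (half of the pixels in view for one continuous second) follow a commonly cited industry guideline for display ads and are not something this article specifies.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    left: float
    top: float
    width: float
    height: float


def visible_fraction(ad, viewport):
    """Share of the ad's area that overlaps the viewport (0.0 to 1.0)."""
    if ad.width <= 0 or ad.height <= 0:
        return 0.0
    overlap_w = min(ad.left + ad.width, viewport.left + viewport.width) - max(ad.left, viewport.left)
    overlap_h = min(ad.top + ad.height, viewport.top + viewport.height) - max(ad.top, viewport.top)
    if overlap_w <= 0 or overlap_h <= 0:
        return 0.0
    return (overlap_w * overlap_h) / (ad.width * ad.height)


def is_viewable(samples, min_fraction=0.5, min_seconds=1.0):
    """samples: time-ordered list of (timestamp_seconds, visible_fraction).

    True once the ad stays at or above min_fraction for an unbroken run
    of at least min_seconds.
    """
    run_start = None
    for timestamp, fraction in samples:
        if fraction >= min_fraction:
            if run_start is None:
                run_start = timestamp
            if timestamp - run_start >= min_seconds:
                return True
        else:
            run_start = None  # the run was interrupted, start over
    return False


# A 300x250 ad whose top edge sits 100 px above the bottom of the viewport.
ad = Rect(left=0, top=500, width=300, height=250)
viewport = Rect(left=0, top=0, width=1280, height=600)
print(visible_fraction(ad, viewport))  # 0.4, so below the 50% default
```

Tracking an unbroken run matters: an ad that flickers in and out of view several times should not count as viewable just because the visible moments add up to a second.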

Viewable space is not limited to ‘above the fold’ placement; it’s more about ensuring the ad is positioned where the target audience is most likely to see it, thereby enhancing brand engagement and relevance.

For instance, if the target audience frequently engages with content at the bottom of a webpage, then strategically placing the advertisement there may yield a better impact, especially if it aligns with contextually relevant content and is where the audience spends significant time.

Several tactics, such as geo-targeting for location-based audiences, are instrumental in ensuring effective ad placement and enhanced audience targeting.

Geo-Targeting

Geo-targeting, also known as location-based advertising, is a strategic approach to personalizing advertisements by location in order to achieve higher engagement and viewability.

This technique can strategically place ads at the point of sale, driving customer behavior and in-store traffic, and is integral to sophisticated marketing strategies that bolster in-the-moment ad relevance.

It capitalizes on pinpointing the audience’s location to present them with ads that resonate with their geographic context, an aspect of programmatic ad buying that leverages automated quality controls.
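A simple way to check geo-targeting accuracy is to measure how many delivered impressions landed outside the targeted locations. The Python sketch below assumes each impression has already been resolved to a country code. The function name and input format are hypothetical.

```python
def geo_mismatch_rate(impression_countries, target_countries):
    """Fraction of impressions delivered outside the targeted countries."""
    impressions = list(impression_countries)
    if not impressions:
        return 0.0
    targets = {country.upper() for country in target_countries}
    off_target = sum(1 for country in impressions if country.upper() not in targets)
    return off_target / len(impressions)


# Three of four impressions were delivered in the targeted countries.
print(geo_mismatch_rate(["US", "US", "CA", "BR"], {"US", "CA"}))  # 0.25
```

A persistently high mismatch rate for one publisher or exchange is the kind of discrepancy a verification report would surface.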

The Verified Opinion on Ad Verification

The performance of advertisements and brand perception are impacted by multiple factors, including ad fraud, inappropriate content placement, and ads not being seen by the intended consumers, which together can significantly affect ad effectiveness and ROI.

Ad verification tackles these challenges head-on, providing benefits crucial for ad fraud mitigation and improving advertising ROI. These advantages include:

- Elevating ad performance by ensuring effective ad impressions.

- Securing placement in viewable spaces to boost audience reach.

- Guaranteeing that ads are accompanied by suitable content, thereby protecting brand reputation.

- Confirming that ad display complies with contractual agreements.

- Combating ad fraud, thereby safeguarding digital advertising integrity.

- Maximizing marketing budgets through targeted ad placement.

- Promoting website traffic transparency, vital for maintaining a trustworthy digital advertising ecosystem.

Ad verification is pivotal in curtailing harmful and fraudulent activities, ensuring robust ad tracking and data accuracy. It upholds the interests of advertisers, publishers, and viewers by fostering a secure and reliable digital advertising landscape.

FAQs about Ad Verification

What Exactly is Ad Verification, and Why Should I Care?

Ad verification, huh? Picture it like a bouncer at a club, but for ads. It’s all about making sure your ad shows up in the right scene—no bad neighborhoods or sketchy back alleys of the web.

If you’re pouring cash into ads, you wanna be darn sure they’re popping up where your future customers can see them, not lost in some digital void or, worse, chilling next to something that’ll make your brand look bad. It’s about keeping things legit and your brand’s rep shiny.

Can You Break Down How Ad Verification Works?

Alright, think of it like GPS for your ads. When you send out an ad into the wild, ad verification tracks it, makes sure it’s in the right spot, not someplace it shouldn’t be.

This tech digs into the details, checking the content around the ad, who’s seeing it, and if it’s actually being seen at all. It’s like a detective meets quality control, all about getting your money’s worth and keeping your ads clean.

What’s the Difference Between Ad Fraud and Poor Ad Placement?

So, ad fraud’s like someone stealing your billboard space and using it to sell their own stuff. That’s outright theft.

Poor ad placement? That’s your ad ending up on a billboard in the desert where no one’s around to give it a glance. One’s about someone gaming the system, the other’s about just missing the mark on where your ad should be. Both hit you in the wallet, but they’re different beasts.

Is Ad Verification Really Worth the Investment?

Totally! It’s like insurance for your ad spend. You wouldn’t buy a car and not insure it, right? So why risk your ad budget?

It keeps your ads away from dodgy content and ensures you’re not throwing money at invisible ads that no one ever sees. In short, it’s about making every penny count, getting your message out there, and keeping your brand’s image spotless.

How Do Ad Verification Companies Protect My Brand?

These guys are your brand’s bodyguards online. They roll up their sleeves and dive into the gritty details, scanning where your ad’s gonna show up, who’s gonna see it, and what kind of content it’s rubbing shoulders with. They’re all about making sure your ad isn’t partying with the wrong crowd and that your brand stays as clean as a whistle.

Can Ad Verification Impact My Marketing ROI?

Oh, absolutely. It’s like making sure your arrows hit the target. If your ads are in the right place, seen by the right folks, your ROI could skyrocket.

You’re not tossing your cash into a black hole—you’re investing it in ads that work, that get seen, and that drive sales. It’s all about getting bang for your buck.

Will Ad Verification Slow Down My Campaign Launches?

Nah, it’s more like a pit stop in a race. You might think it’ll slow you down, but actually, it’s there to make sure you’re good to go—tyres checked, fuel topped up.

These checks make sure you don’t hit a speed bump down the line with your campaign that could cost you big time. It’s about being smart, not fast.

What Kinds of Ads Can Be Verified?

Pretty much any digital ad you can think of—banners, videos, mobile, you name it. It’s not just about whether the ad can be seen; it’s also about making sure it’s in the right environment.

Whether your ad is a flashy video on a high-traffic site or a subtle banner on a niche blog, ad verification has got your back.

How Does Ad Verification Address the Issue of Viewability?

Alright, so viewability is about whether real humans can actually see your ads. It ain’t just about your ad being there; it’s about it being in the line of sight.

Ad verification tools measure this stuff—like how much of your ad is showing and for how long. It’s about making sure your ads aren’t just out there but that they’re actually making an impact.

What if My Ads Are Displayed in a Way That Doesn’t Align With My Brand Values?

That’s a biggie. Your ad might end up next to content that’s like oil to your water—doesn’t mix well. Ad verification steps in like a referee, blowing the whistle before your ad cozies up to something that could give your brand a black eye. It’s about aligning your ads with content that vibes with what you stand for, keeping your brand identity tight and right.

Conclusion on Ad Verification

So, we’ve been talking about ad verification like it’s the new kale of the digital world – super good for you, even if you’re not entirely sure why you need it. But hey, stick with me here. It’s the behind-the-scenes wizard that keeps your ad’s reputation sparkly clean. Without it, you might as well be tossing your hard-earned money into a black hole, hoping it lands somewhere good.

But when it’s in play? Your ads are golden. They show up where they should, dodge the digital dodgy spots, and actually get seen by eyeballs that want to see them. It’s not just tossing a dart with a blindfold on; it’s more like having a homing beacon on that dart so it hits bullseye every time.

